Ekimetrics’ client, a leader in the insurance sector, wanted to improve its management of customer churn as part of a much broader corporate initiative: repositioning itself from a financial assets company to a service company that helps its policyholders better manage their personal risk.

A data-driven approach is therefore an integral part of their long-term corporate vision and, as identified by Ekimetrics, one of the key factors for project success. It represents a real paradigm shift for the insurer and its teams around the world.

Most companies have only a partial view of their customers’ journey, due to resource constraints or limited data availability.

At Ekimetrics, we believe that building a holistic view of the client journey requires, first and foremost, a thorough understanding of the company’s business lines and sector. This understanding makes it possible to identify the key interactions that mark each stage of the client’s experience.

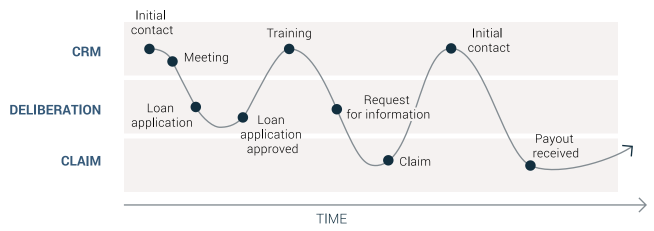

At the beginning of the engagement, a series of interviews and meetings with employees identified three main groups of client interactions during the life of an insurance policy.

The first group covers requests for risk coverage, which are typically followed by a decision-making stage in which the insurer accepts or denies the request depending on the level of risk. This step involves multiple information exchanges between the insurer and its clients, as well as an in-depth evaluation of many criteria and outcomes (e.g. conditional acceptances, ranges of possible scenarios, the debtor’s financial health, macroeconomic factors), all of which add to the complexity of the journey and of the data to be handled.

The second group covers day-to-day management, penalties, claims tracking and marketing events. As part of the insurer’s CRM strategy, a range of predefined actions shape the customer experience throughout the contract (e.g. training at three months, a sales visit at six months, information requests). These touchpoints could have generated valuable CRM data, but the opportunity was missed because account managers had not consistently entered data into the CRM tools.

The third group, claims, involves delicate situations that require a case-by-case approach, with numerous possible scenarios and outcomes: reimbursement in instalments, total or partial reimbursement, negotiated reimbursement times, etc. This is a crucial aspect of the client relationship, with a significant impact on satisfaction, as outlined further below.

Exploring these three groups of interactions soon revealed that the client journey was not linear and differed from one client to the next, adding further complexity. The key learning was that no single event has a drastic impact on churn; rather, the customer experience can be significantly affected by a series of apparently inconsequential events.

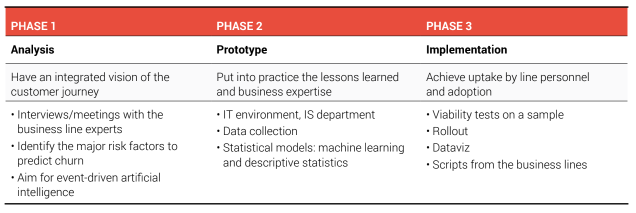

A single technological solution would have been complicated to build, since each organisation had its own business-line characteristics, different risk factors, and either contractual or non-contractual business models (the latter being subject to soft churn). Given these complications, building an ad-hoc solution starting at business-line level was much more likely to succeed, so we adopted a tailor-made approach.

The insurance sector is relatively advanced in adopting the latest statistical techniques, so it was apparent to most stakeholders that attrition management should be based on a dynamic score.

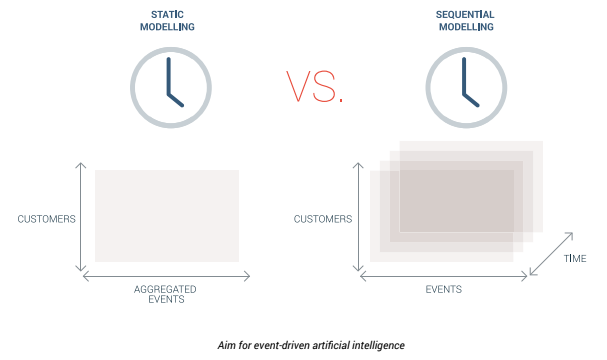

A key success factor was the ability to statistically capture changes in client satisfaction driven by interactions over the life of the policy. Two aspects therefore had to be fully captured: the chronological nature of events (the order of events matters) and dynamic scoring (the score must be updated continuously). It made sense to approach the project as the reconstruction of a story: the story of client behaviour, with all its unforeseen twists, whether positive or negative.
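To make the "client story" idea concrete, here is a minimal sketch of how raw interactions might be turned into ordered, per-client event sequences, which is the input shape a chronological model needs. It assumes each interaction is recorded as a `(client_id, date, event_type)` record; the field layout, event labels and helper names are hypothetical and are not the insurer's actual schema.

```python
from collections import defaultdict
from datetime import date

# Hypothetical interaction records: (client_id, event_date, event_type).
# The event vocabulary is illustrative only, not the insurer's taxonomy.
EVENTS = [
    ("C1", date(2018, 3, 1), "coverage_request"),
    ("C1", date(2018, 1, 15), "contract_start"),
    ("C2", date(2018, 2, 10), "contract_start"),
    ("C1", date(2018, 3, 20), "coverage_refused"),
    ("C2", date(2018, 5, 5), "claim_reimbursed"),
    ("C1", date(2018, 6, 2), "sales_visit"),
]


def build_client_stories(events):
    """Group events by client, then sort each client's events by date."""
    stories = defaultdict(list)
    for client_id, event_date, event_type in events:
        stories[client_id].append((event_date, event_type))
    # Order matters for a chronological model, so sort each story by date.
    return {cid: [etype for _, etype in sorted(evts)] for cid, evts in stories.items()}


def encode(stories):
    """Map each event type to an integer index (0 is reserved for padding)."""
    vocab = {}
    for story in stories.values():
        for etype in story:
            vocab.setdefault(etype, len(vocab) + 1)
    encoded = {cid: [vocab[e] for e in story] for cid, story in stories.items()}
    return encoded, vocab


stories = build_client_stories(EVENTS)
encoded, vocab = encode(stories)
print(stories["C1"])  # ['contract_start', 'coverage_request', 'coverage_refused', 'sales_visit']
```

Even though C1's records arrive out of order, the resulting story is chronological, and the integer encoding is what a sequence model would consume.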

The goal of the project was to enable the insurer to improve the client relationship at the moments that really count, and to reinvent existing processes with the relevant departments where necessary.

Many scoring methods are available, but some are more suitable than others when it comes to business interpretation. Machine learning approaches, for example, are particularly difficult to translate into business terms, and making them workable for business lines is often a challenge.

While most good data providers can deliver analytically powerful results, few manage to make these deliverables legible and usable in a business context, and most are unable to answer the question: what do I need to change in my daily work or strategy to solve my problem?

This is where the concept of value creation comes into its own. The final step – translation of insights into business line value – remains a challenge for most data/advanced analytics providers in 2019.

From the outset, we ensured that our approach was adopted by teams both upstream and downstream, a vital prerequisite for garnering support for the project and creating tangible value.

The first step in retrieving data is to connect to the information systems in order to access, collect and begin exploring the data. It is important to be flexible at this stage, since there are as many IT systems as there are companies!

This requires an in-depth understanding of each cloud provider’s environment (AWS, Azure, GCP), in order to juggle the various technologies and build an IT environment that serves the business objective in the short and medium term. This step can take anywhere from a few weeks to a few months, depending on the nature of the available databases and their suitability for the intended objective.

To avoid bottomless pit syndrome, Ekimetrics favours a short time-to-market for initial results: stakeholders were convinced through concrete proof, and findings were built up through an iterative, agile approach.

As with a car, no statistical model or algorithm works effectively without fuel. The data injected into the model should be chosen very carefully and be targeted based on a direct link to a specific business line problem. The variety, completeness, quality and quantity of the data impact the selection and relevance of a specific algorithm.

For the insurer, client data was naturally at the centre of the equation. We explored the accessibility, relevance and robustness of transactional data (purchasing behaviour), activity data (behaviour at each stage of the conversion funnel, such as site navigation) and customer service data (complaints, etc.).

Since some of this data could not be used, we settled on decision-making data, commercial data (account manager interactions) and claims/complaints handling data, which were reliable enough to reconstruct a complete and realistic client journey. Had none of this data been available, the project might have been unworkable before it even started. A data scientist’s creativity in identifying the right data, or finding ways to obtain it, is a key skill.

Quantity also matters. To ensure the reliability of our statistical models, we had to check the volume of data available. In this insurer’s case, we harvested several hundred thousand data items, a necessary prerequisite for training the model and enabling it to draw credible and reliable conclusions.

Finally, the structure of the accessible data also influences the choice of algorithm. Deep learning, for example, requires rich data across several dimensions, such as time, clients and events. The unavailability of certain data can immediately rule out some machine learning approaches, although that was not the case for our client.

Machine learning: a neural network to tackle complexity

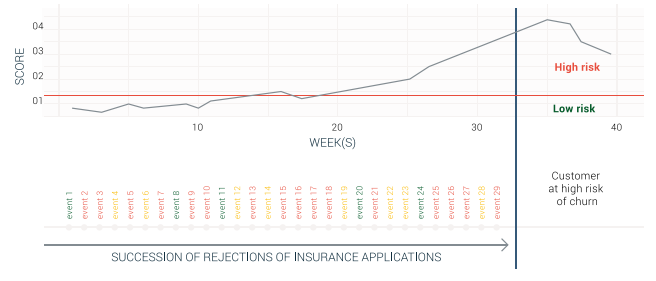

We have already discussed the complexity of the client journey: several layers of interactions with different departments are superimposed, creating numerous possible scenarios depending on the type of event (positive decision, refusal, policy renewal subject to conditions, etc.).

We therefore chose a neural network approach, using a variant called a recurrent neural network (RNN). This type of model can capture temporal dependencies and the chronology of key moments, and can determine the positive or negative impact of events on customer satisfaction.

We deployed a dynamic scoring approach, capable of evolving over time. Deep learning was the most appropriate way to carry out this multi-dimensional analysis: traditional scoring would have required a massive amount of manual effort to create variables and would fundamentally have been unable to build a client story, whereas a deep learning approach is powerful enough to capture the chronology of events and their positive and negative influences on behaviour.
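The mechanism behind a recurrent dynamic score can be illustrated with a minimal sketch: a simple (Elman-style) RNN forward pass in NumPy that emits one score after each event. This is not the architecture used in the project, which the article does not detail. It assumes integer-encoded event sequences, and all sizes and weights are hypothetical; the weights are random and untrained, whereas in practice they would be learned from historical journeys labelled with churn outcomes.

```python
import numpy as np

rng = np.random.default_rng(0)

VOCAB_SIZE = 6   # hypothetical: 5 event types + padding index 0
EMBED_DIM = 4
HIDDEN_DIM = 8

# Untrained, randomly initialised parameters (illustrative only).
E = rng.normal(scale=0.5, size=(VOCAB_SIZE, EMBED_DIM))      # event embeddings
W_xh = rng.normal(scale=0.5, size=(EMBED_DIM, HIDDEN_DIM))   # input -> hidden
W_hh = rng.normal(scale=0.5, size=(HIDDEN_DIM, HIDDEN_DIM))  # hidden -> hidden
b_h = np.zeros(HIDDEN_DIM)
w_out = rng.normal(scale=0.5, size=HIDDEN_DIM)                # hidden -> score
b_out = 0.0


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def dynamic_scores(event_ids):
    """Return one churn-risk score in (0, 1) after each event in the sequence."""
    h = np.zeros(HIDDEN_DIM)  # the hidden state summarises the story so far
    scores = []
    for idx in event_ids:
        x = E[idx]
        # Recurrence: the new state combines the current event with the past state.
        h = np.tanh(x @ W_xh + h @ W_hh + b_h)
        scores.append(sigmoid(h @ w_out + b_out))
    return np.array(scores)


scores = dynamic_scores([1, 2, 3, 4])
# The same events in reverse order generally yield a different final score.
reversed_scores = dynamic_scores([4, 3, 2, 1])
```

Because the hidden state is carried forward, the score after each event depends on everything that preceded it and on the order in which it happened, which is exactly the "chronology" and "continuous score" requirements described above.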

Descriptive statistics: bringing clarity to the black box

As with any neural network approach, however, interpretation is an issue, since it is difficult to attribute the score to individual events. To maintain clarity and keep the message consistently business-oriented, we incorporated descriptive statistics and highly transparent models (linear regression, decision trees, etc.). This hybrid use of statistical methods, combined with support from the Executive Committee, adoption by operational teams and quality controls (consistency checks, etc.), enabled us to strike a subtle balance between precision of results and tangible business value, and to propose realistic action plans.
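To illustrate why transparent models help with the business message, here is a minimal sketch of an ordinary least-squares linear regression of a satisfaction measure on per-client event counts. The data are synthetic toy numbers, generated from a known linear rule so the fit can be checked, and the event columns are hypothetical; nothing here reflects the insurer's actual data or results.

```python
import numpy as np

FEATURES = ["coverage_refusals", "complaints", "sales_visits"]  # hypothetical

# Synthetic toy data: one row per client, counts of each event type.
X = np.array([
    [0, 0, 2],
    [1, 0, 1],
    [2, 1, 0],
    [0, 2, 3],
    [3, 0, 1],
    [1, 1, 2],
], dtype=float)
# Synthetic satisfaction score built from a known rule
# (7 - 1.5*refusals - 2*complaints + 0.5*visits) so the fit can be verified.
y = 7 - 1.5 * X[:, 0] - 2.0 * X[:, 1] + 0.5 * X[:, 2]

# Add an intercept column and solve ordinary least squares.
A = np.column_stack([np.ones(len(X)), X])
coef, *_ = np.linalg.lstsq(A, y, rcond=None)

intercept, effects = coef[0], dict(zip(FEATURES, coef[1:]))
for name, value in effects.items():
    print(f"{name}: {value:+.2f} per event")
```

Each coefficient reads directly as "one more event of this type is associated with this change in satisfaction", which is far easier to discuss with operational teams than the internal weights of a recurrent network.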

As discussed at the start of this case study, it was imperative for the insurer that this project not be simply another proof of concept (POC) to be filed away.

In the context of the transition in the insurer’s positioning from an “assets” company to a “client-oriented service” company, it was crucial that the results of this analysis be implemented and used by local teams as part of their effort to improve client experiences across the company.

We therefore developed tactical but highly practical implementation steps to bridge the gap between the strategic vision enabled by data science and the day-to-day work of the operational teams. Here are some examples:

An example is constructed below:

Data science provided the common thread between the development of the relational promise, the choice of use cases supporting this new brand positioning, and their eventual implementation. Beyond statistical models, we believe in the importance of building new, sustainable data and analytical capabilities, leading to an ecosystem of data solutions, new analytical frameworks and new business-line processes that promote a better understanding of client expectations.

—

Article co-written with the EBG team as part of The Digital Benchmark, and delivered in Berlin in May 2019 to more than 800 CMOs, Marketing Directors, Data Directors and Customer Experience Directors.
